From Business Analysis to DevOps and Data Analysis, Artificial Intelligence (AI) has been branching out to all IT segments. The latest application of AI, AIOps, helps IT teams automate tedious tasks and minimize the chance of human error.

Learn what AIOps is, how organizations use it to enhance their IT workflows, and how to start using AIOps to improve your IT environment’s efficiency.

What is AIOps?

AIOps stands for Artificial Intelligence for IT Operations. It is the use of AI and machine learning to monitor and analyze data from every corner of an IT environment. Algorithmic analysis of that data gives DevOps and ITOps teams the means to make informed decisions and automate tasks. It’s vital to note that AIOps does not take people out of the equation. Instead, AI fills in the operational gaps that commonly cause difficulties for humans.

Here’s a quick summary of what AIOps can do for an organization:

- Filter low-priority alerts from attention-worthy problems

- Help identify and quickly resolve system issues

- Automate repetitive tasks

- Detect system anomalies and deviations

- Put a stop to traditional team silos

It is challenging to assess how AIOps fits into the current IT landscape. It does not replace any existing monitoring, log management, or orchestration tools. AIOps exists at the junction of all the tools and domains, processing and integrating information across the entire IT infrastructure. By doing so, AIOps turns partial views into a synchronized, 360-degree picture of operations that is easy to keep track of.

AIOps environments are made out of sets of specialized algorithms narrowly focused on specific tasks.

These algorithms can pick out alerts from noisy event streams, identify correlations between issues, use historical data to auto-resolve recurring problems, and more. The cumulative effect of all these processes can do wonders for a business. It boosts a system’s stability and performance while preventing issues from impairing critical operations.

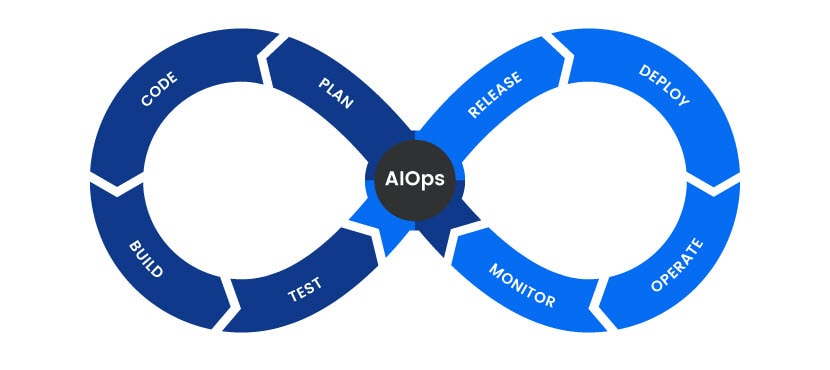

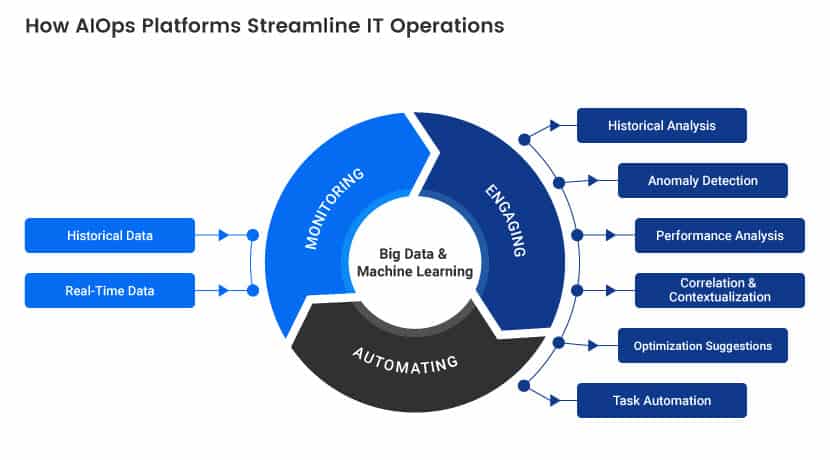

AIOps Architecture

AIOps has two central components: Big Data and Machine Learning.

It aggregates observational data from monitoring systems and engagement data from tickets, incidents, and event recordings. AIOps then performs comprehensive analytics of the gathered data and uses machine learning to figure out improvements and fixes. Think of it as an automation-driven continuous integration and deployment (CI/CD) for IT functions.

The entire process starts with monitoring. Monitoring tools are an essential part of an AIOps architecture: they work with multiple sources and handle the immense volume and wide disparity of data in modern IT environments. Once it has access to all the info, an AIOps platform typically uses a data lake or a similar repository to collect and distribute the data.

After processing data, AIOps systems derive insights through various AI-fueled activities, such as analytics, pattern matching, natural language processing, correlation, and anomaly detection. Finally, AIOps makes extensive use of automation to act upon its findings.
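As a rough illustration of the anomaly detection step, here is a minimal sketch that flags metric readings far from the average using a plain z-score. The function name `find_anomalies`, the sample CPU readings, and the threshold are all assumptions made for this example, and a real AIOps platform would likely rely on far more sophisticated models than this simple statistical stand-in.

```python
from statistics import mean, stdev


def find_anomalies(values, threshold=3.0):
    """Return (index, value) pairs whose z-score exceeds the threshold."""
    if len(values) < 2:
        return []  # not enough data to estimate spread
    mu = mean(values)
    sigma = stdev(values)
    if sigma == 0:
        return []  # all readings identical, nothing stands out
    return [
        (i, v) for i, v in enumerate(values)
        if abs(v - mu) / sigma > threshold
    ]


# Hypothetical CPU utilization samples (percent) with one obvious spike.
cpu_percent = [41, 43, 40, 42, 44, 41, 97, 42, 43, 40]
print(find_anomalies(cpu_percent, threshold=2.5))
```

With a sample this small, a single outlier inflates the standard deviation, which is why the example uses a threshold below the customary 3.0.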

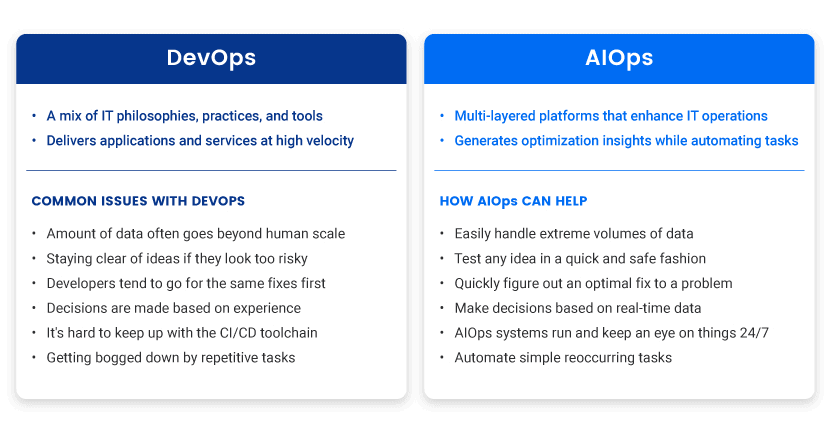

AIOps is not a separate entity from DevOps, but instead a set of technologies that complement the goals of DevOps engineers and help them embrace the scale and speed needed for modern development.

The world of DevOps revolves around agility and flexibility. AIOps platforms can help automate steps from development to production, projecting the effects of deployment and auto-responding to alterations in a dynamic IT environment.

AIOps can also help handle the velocity, volume, and variety of data generated by DevOps pipelines, sorting and making sense of them in real-time to keep app delivery stable and fast.

Here are the benefits AIOps can offer to DevOps engineers:

- Help understand the ins and outs of DEV, QA, and production environments

- Identify optimal fixes

- Test ideas in a quick and safe fashion

- Automate repetitive tasks

- Minimize human error

- Determine improvements and optimizations

AIOps ensures that DevOps engineers spend their time on complex tasks that cannot be automated. It allows humans to focus on areas of development that drive maximum profitability for a business.


Benefits of AIOps

Depending on individual operations and workflows, certain benefits of AIOps can be more impactful than others. Nevertheless, here’s a breakdown of key advantages that come with deploying AIOps:

Noise reduction

By identifying low-priority alerts through machine learning and pattern recognition, AIOps helps IT specialists comb through high volumes of event alarms without getting caught up in irrelevant or false alerts.

Noise reduction saves a lot of time, but it also allows business-affecting incidents to be spotted and resolved before they cause damage.
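To make the idea of noise reduction more concrete, the sketch below drops low-severity alerts and collapses exact repeats into a single entry with a count. The article describes machine learning and pattern recognition doing this work; the fixed severity cutoff here is a deliberately simple rule-based stand-in, and the `Alert` fields, the 1 to 5 severity scale, and the sample alerts are assumptions for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    source: str
    message: str
    severity: int  # hypothetical scale: 1 = informational, 5 = critical


def reduce_noise(alerts, min_severity=3):
    """Drop alerts below min_severity and collapse exact repeats into counts."""
    counts = {}
    for alert in alerts:
        if alert.severity < min_severity:
            continue  # treated as low-priority noise
        counts[alert] = counts.get(alert, 0) + 1
    return counts


# Hypothetical event stream with repeats and one low-priority alert.
stream = [
    Alert("web-01", "disk usage above 90%", 4),
    Alert("web-01", "disk usage above 90%", 4),
    Alert("web-02", "certificate renewed", 1),
    Alert("db-01", "replication lag", 5),
    Alert("web-01", "disk usage above 90%", 4),
]

for alert, count in reduce_noise(stream).items():
    print(f"{alert.source}: {alert.message} (x{count})")
```

Five raw alerts shrink to two entries that a person actually needs to look at.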

Unified view of the IT environment

AIOps correlates data across various data sources and analyzes them as one. AIOps eliminates information silos and gives a contextualized vision across the entire IT estate. That allows all teams to be on the same holistic page, turning the entire system into a well-oiled machine.

Meaningful data analysis

AIOps brings all the data across the system into one place, enabling more meaningful analysis that is quick due to AI and thorough as it leaves no digital stone unturned.

By bringing all the data together and accurately analyzing it, AIOps makes a substantial impact on the decision-making front.

Time-saving process automation

Thanks to knowledge recycling and root cause analysis, AIOps automates simple recurring operations.

Upon discovering an issue, AIOps reacts to it in real-time. Depending on the nature of the problem, it initiates an action or moves to the next step without the need for human intervention.

A proactive approach to problem management

AIOps analyzes historical data in search of patterns in system behavior. AIOps is a great way to stay ahead of future incidents, enabling teams to fix root causes and run a more seamless system.

Between automation, problem-solving, and in-depth analytics, AIOps tools make workflows quicker and more consistent. Consequently, they reduce the chance of human error. Meanwhile, IT teams get to focus on their areas of expertise instead of having to deal with low-value tasks that distract and slow them down.

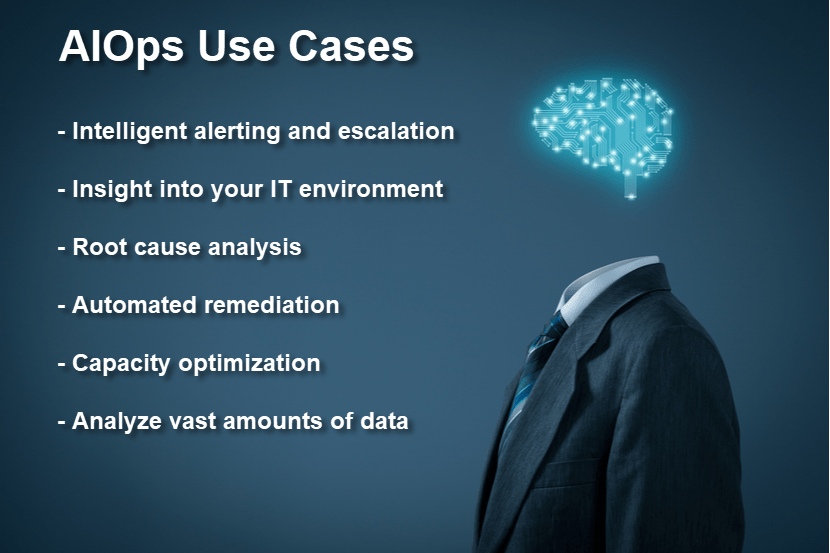

AIOps Use Cases

AIOps continually evolves and offers new functionalities. Currently, its main use cases are the following:

Intelligent alert monitoring and escalation

By ingesting data from all sections of an IT environment, AIOps tools stop alert storms from causing domino effects through connected systems. They reduce alert fatigue and help accurately prioritize issues.
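One simple way to picture how an alert storm gets tamed is time-window grouping: alerts that arrive close together are treated as one incident instead of many. The sketch below is only an assumption about how such grouping could work. The function name `group_into_incidents`, the 60-second window, and the sample timestamps are hypothetical, and real platforms would also correlate on topology, service, and message content.

```python
def group_into_incidents(timestamps, window_seconds=60):
    """Group alert timestamps (in seconds) into incidents.

    An alert joins the current incident when it arrives within
    window_seconds of the previous alert; otherwise it starts a new one.
    """
    incidents = []
    for ts in sorted(timestamps):
        if incidents and ts - incidents[-1][-1] <= window_seconds:
            incidents[-1].append(ts)
        else:
            incidents.append([ts])
    return incidents


# Hypothetical arrival times of six alerts, in seconds since a reference point.
arrivals = [0, 10, 50, 300, 320, 1000]
for number, incident in enumerate(group_into_incidents(arrivals), start=1):
    print(f"incident {number}: {len(incident)} alert(s)")
```

Here six alerts collapse into three incidents, which is the kind of reduction that eases alert fatigue.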

Cross-domain situational understanding

AIOps maps causal relationships while aggregating data, granting a continuous, clear line of sight across the entire IT estate.

Automatic root cause analysis

Once an alert happens, an AIOps platform presents top suspected causes, as well as the evidence that led it to such a conclusion.

Automatic remediation of IT environment issues

AIOps automates remediation for problems that have already occurred on several occasions. It uses historical data from past issues to recognize them and either offers the best solution or remedies the issues outright.
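The idea of reusing past fixes can be sketched as a small lookup built from incident history. In this hedged example, the issue signatures, fix names, and the `min_occurrences` cutoff are all hypothetical, and the "best" fix is simply assumed to be the one that succeeded most often in the past.

```python
from collections import Counter, defaultdict


def build_playbook(history, min_occurrences=3):
    """Map each recurring issue signature to its most often successful fix.

    history is a list of (signature, fix, succeeded) tuples. Signatures
    seen fewer than min_occurrences times are left out of the playbook.
    """
    successes = defaultdict(Counter)
    seen = Counter()
    for signature, fix, succeeded in history:
        seen[signature] += 1
        if succeeded:
            successes[signature][fix] += 1
    return {
        signature: fixes.most_common(1)[0][0]
        for signature, fixes in successes.items()
        if seen[signature] >= min_occurrences
    }


def remediate(signature, playbook):
    """Return the known fix, or None to signal escalation to a human."""
    return playbook.get(signature)


# Hypothetical incident history.
history = [
    ("disk_full", "rotate_logs", True),
    ("disk_full", "rotate_logs", True),
    ("disk_full", "expand_volume", True),
    ("out_of_memory", "restart_service", True),
]

playbook = build_playbook(history)
print(remediate("disk_full", playbook))
print(remediate("out_of_memory", playbook))
```

The second lookup returns `None` because that issue has only been seen once, so it stays with a human until there is enough history to trust an automated fix.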

Monitor application uptime

By proactively monitoring raw utilization, bandwidth, CPU, memory, and more, AI-based analytics can be used to increase overall application uptime.

Cohort analysis

Analyzing vast amounts of data is a struggle for humans but a forte of AIOps, which can handle large systems with ease.

Organizations Adopting AIOps

Different reasons push organizations towards AIOps. Organizations at the forefront of adopting artificial intelligence in IT operations are enterprises with large environments, cloud-native SMEs, organizations with overburdened DevOps teams, and companies with hybrid cloud and on-prem environments.

Most organizations hail the same positive effects following deployment, as evidenced by a survey performed by OpsRamp:

- Around 87% of organizations said that the AIOps-powered solution delivered good results

- The most common use case was intelligent alerting, mentioned by 67% of organizations, followed by root cause analysis, which was highlighted by 61% of the respondents

- Over 50% of organizations mentioned anomaly/threat detection, capacity optimization, and incident auto-remediation as crucial upgrades to their system(s)

- Over 85% of polled organizations said they were able to automate tedious tasks thanks to AIOps

- 77% of organizations claim the number of open incident tickets went down upon implementing AIOps

It is unsurprising that there’s been an 83% increase in the number of organizations currently deploying or thinking about deploying AIOps since 2018. Forward-thinking companies see AIOps as an opportunity to move past brittle rules-based processes, information silos, and the excess of repetitive manual activities.

While evaluating the benefits of AIOps, it’s essential to look beyond its ability to reduce costs directly. By preventing disruptions of critical digital services, as well as accelerating detection and resolution, AIOps paves the way towards better user experience and bumps up customer retention. It also leaves ample room for an IT team to innovate and focus on value-packed activities, which keeps top talent happy and away from competitors.

Implement & Get Started With Artificial Intelligence for IT Operations

The shift towards AIOps starts at the drawing board. Here are pointers in case you’re weighing whether AIOps is the right choice for your team:

Learn about AI

You should know as much about AI-powered operations as humanly possible. This article is a great start, but you should consider hiring an IT consultant to better gauge your system’s fit with AIOps. You also want to familiarize yourself with the capabilities of AI and ML to get a better sense of what these technologies can offer.

Identify Time-Consuming IT Tasks

Identify where the bulk of the team’s time and effort is being poured. If you conclude that most of the energy is going towards solving mundane and repetitive tasks, you’re likely a prime candidate for implementing AIOps.

Consider other applications

Data management is a massive component of AIOps that’s not reserved exclusively for your IT department. Business analytics and statistical analysis are key for any modern organization, so check if you have needs for AIOps on those fronts as well.

Automate one process at a time

There’s no need to go all-in on AIOps immediately. Identify your highest-priority problem and assess how this technology could solve it. Deploy AI there first and, if it yields results, consider expanding it across other systems later.

Measure speed and efficiency

To know whether hefty investments in AIOps are paying off, you need to know which metrics you’ll keep an eye on. Ways of measuring ROI and success vary on a business-to-business basis, but most metrics involve measuring improvements in the speed and efficiency of processes.
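As one possible example of a speed metric, the sketch below compares mean time to resolve (MTTR) before and after a rollout. MTTR is an assumption chosen for illustration, since the right metrics vary by business, and the incident timestamps are made up.

```python
from datetime import datetime, timedelta


def mean_time_to_resolve(incidents):
    """Average (resolved - opened) over a list of (opened, resolved) pairs."""
    if not incidents:
        raise ValueError("no incidents to measure")
    total = sum(
        (resolved - opened for opened, resolved in incidents),
        timedelta(),
    )
    return total / len(incidents)


# Hypothetical incidents before and after an AIOps rollout.
before = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 11, 0)),
    (datetime(2024, 1, 2, 9, 0), datetime(2024, 1, 2, 10, 0)),
]
after = [
    (datetime(2024, 3, 1, 9, 0), datetime(2024, 3, 1, 9, 30)),
    (datetime(2024, 3, 2, 9, 0), datetime(2024, 3, 2, 10, 0)),
]

mttr_before = mean_time_to_resolve(before)
mttr_after = mean_time_to_resolve(after)
improvement = 1 - mttr_after / mttr_before

print(f"MTTR before: {mttr_before}, after: {mttr_after}")
print(f"Improvement: {improvement:.0%}")
```

Tracking the same calculation over time gives a simple baseline for judging whether the investment is paying off.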

Takeaway

The global AIOps market is projected to grow to $11.02 billion by 2023, with a 34% compound annual growth rate (CAGR) in the meantime.

Interest in the benefits of AIOps picked up as organizations began to seek ways to manage a velocity, volume, and variety of digital data that goes beyond human scale. The field demonstrates a clear ability to improve customer and talent retention rates. The number of companies looking to implement artificial intelligence in IT operations is expected to skyrocket.

AIOps is here to stay. Its ability to allow ITOps to focus on solving critical and high-value issues instead of “keeping the lights on” is game-changing. It’s only a matter of time before AIOps becomes the industry norm.